Hello!

I’m working on an automatic and “easy” task to transfer my finished downloads to Amazon via rclone.

So I created this topic to share what I’ve done and to ask for your help improving it!

What I’ve done:

- Installing rclone: http://rclone.org/install/

- Changing /etc/lshell.conf to allow rtorrent users to run the rclone command:

- Connecting as your rtorrent user (dl for me) and creating the new remote for Amazon Drive (or another cloud provider):

How to configure your rclone Amazon remote: http://rclone.org/amazonclouddrive/

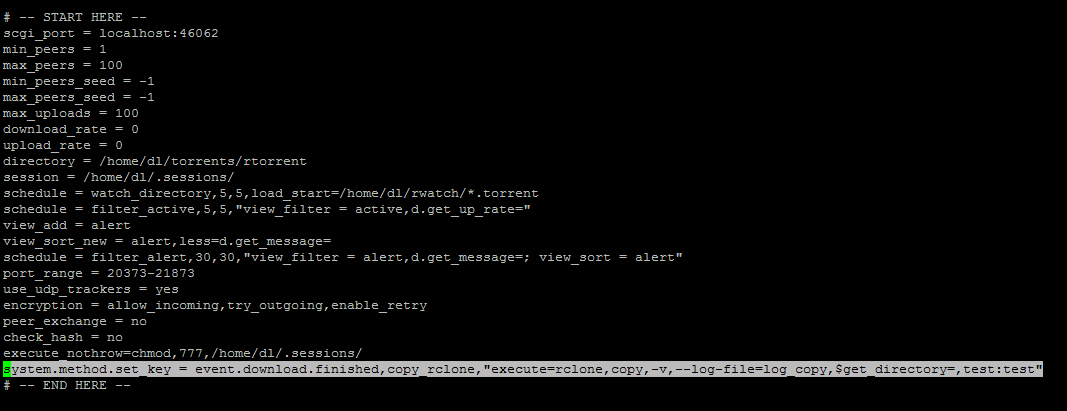

- Adding this line to the .rtorrent.rc file of your rtorrent user:

system.method.set_key = event.download.finished,copy_rclone,"execute=rclone,copy,-v,--log-file=log_copy,$get_directory=,test:test"

You will need to replace “test:test” with the rclone remote you configured earlier.

You can also enter just “remote_name:” (“test:” in my example); rclone will then copy your finished torrent to the root path of your remote.

- Reloading rtorrent

- Downloading a torrent and checking the log file named “log_copy” in your rtorrent user’s home directory to see whether everything went OK

- It works!

Now I need help to improve it, because here is what happens when a download finishes:

It launches this command: rclone copy -v --log-file=log_copy $get_directory= test:test

My problem is the “$get_directory=” variable, which in my case gives /home/dl/torrents/rtorrent, so rclone copies every new file in /home/dl/torrents/rtorrent, even files that have not finished downloading.

What I would like:

I created several folders in /home/dl/torrents/rtorrent (music, movies, shows, …) and I would like to find the variable that gives me the folder where I put my torrent (e.g. /home/dl/torrents/rtorrent/music).

I have already tried these variables:

$d.directory_base

$d.get_directory

$d.get_directory_base

$directory.default

$get_directory

$d.base_path

$d.get_base_path

$directory.default and $get_directory copy to the folder I want (e.g. if I download a torrent to /home/dl/torrents/rtorrent/music, it is copied to test:test/music/); the others copy to the root folder of my rclone remote.

More details : My rtorrent.rc file · GitHub

I think I also need to replace “test:test” with something like “test:test/$get_directory=”, so that rclone does not scan the whole remote. I already tried that, but it creates a folder literally named $get_directory=.
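To make the idea concrete, here is a minimal shell sketch of the mapping I have in mind, from a local download folder to a sub-path on the remote. The function name remote_dest is hypothetical (it is not part of rclone or rtorrent), and the sketch assumes the torrent’s folder is passed in as an argument; it does not solve the question of which rtorrent variable provides that folder.

```shell
#!/bin/sh
# Hypothetical helper, not part of rclone or rtorrent.
# BASE_DIR and REMOTE are the values used in this post; adjust to your setup.
BASE_DIR="/home/dl/torrents/rtorrent"
REMOTE="test:test"

# Print the remote path that matches a local folder under BASE_DIR.
remote_dest() {
    local_dir="${1%/}"                      # drop one trailing slash, if any
    case "$local_dir" in
        "$BASE_DIR"/*)
            rel=${local_dir#"$BASE_DIR"/}   # e.g. "music"
            printf '%s/%s\n' "$REMOTE" "$rel"
            ;;
        *)
            # BASE_DIR itself, or a folder outside it: use the remote root
            printf '%s\n' "$REMOTE"
            ;;
    esac
}

remote_dest /home/dl/torrents/rtorrent/music   # prints test:test/music
```

With something like this, the copy would become rclone copy -v --log-file=log_copy /home/dl/torrents/rtorrent/music test:test/music, so only the matching folder on the remote has to be checked.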

The goal is to save time, because scanning many TB takes a long time.

I don’t know if my request is clear (my English is really not perfect), so let me know if you need more explanation.